Actually Useful AI

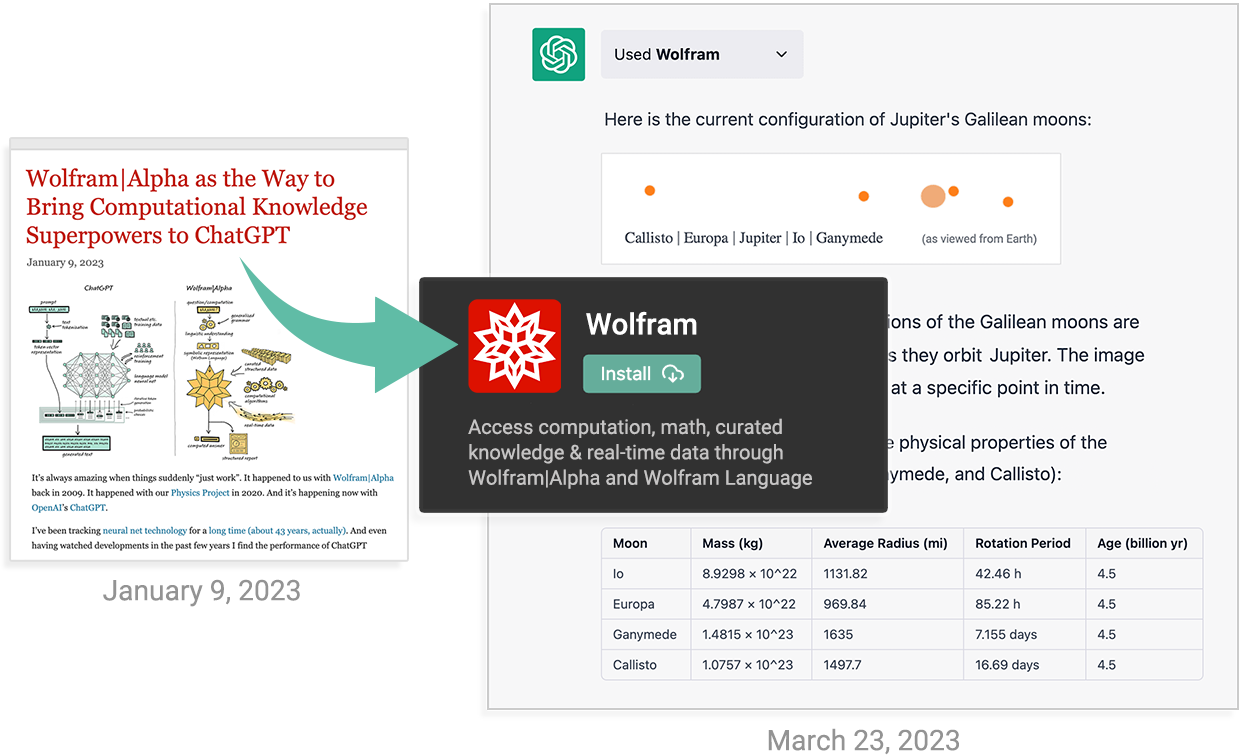

writings.stephenwolfram.com

ChatGPT—for all its remarkable prowess in textually generating material “like” what it’s read from the web, etc.—can’t itself be expected to do actual nontrivial computations, or to systematically produce correct (rather than just “looks roughly right”) data, etc. But when it’s connected to the Wolfram plugin it can do these things.

From https://scribe.rip/@dimillian/adding-ai-generated-image-description-to-ice-cubes-c4e7990a5915

> I’ve recently released a new feature for Ice Cubes, and users have
> loved it! On Mastodon, it’s considered an excellent ethic to add media
> descriptions when posting medias on the network. It’s necessary for
> visually impaired people but also for anyone who would want to get
> more detail about the image.

Ice Cubes is an open-source Mastodon app. Under the hood it uses OpenAI's Vision API. (Source article: https://dimillian.medium.com/adding-ai-generated-image-description-to-ice-cubes-c4e7990a5915)

I recently built a web scraper / website to track changes in Burger King prices. I also made a video where I try to describe how I used ChatGPT in this process and how useful it was to me.

I've been toying with this idea for a while and have made a few attempts at following tutorials with some very basic coding skills, but honestly I sometimes find a lot of the resources and information quite overwhelming to take in, so I thought I would ask you guys with more experience here instead!

I have ADHD and often find myself juggling many creative tasks at once: writing music, writing fiction, graphic design, etc. I find the "load" of all of these different spinning plates in my brain to be a lot to hold onto at times. I have found benefits in using ChatGPT for simplifying things or even just working through my own thought processes, and wanted to take this further.

To my knowledge, ChatGPT has a limited memory/recall ability, and after particularly lengthy conversations it is unable to recall information from the beginning and starts guessing. Certainly with GPT-3.5, anyway. I have also never been too comfortable sharing much of my creative projects with GPT, for privacy reasons. I have been trying to figure out whether a local model such as GPT4All or Ollama would function better for my needs.

As an example, one of my largest projects is a fantasy fiction novel where I have spent so much time worldbuilding that my notes are quite extensive. The connections between aspects can be difficult for me to keep track of, which sometimes results in me writing contradictions and having to spend time fixing my own errors. I do have Stable Diffusion on my PC, which I use to generate artwork to help me visualise people and the world (not intended for publication, obviously; I'd hire a real artist for that), and I have a fair bit of experience with it.

With GPT4All, or an equivalent, would it be possible for me to train a model on all of my notes and information, saved in a separate document, so that I can discuss and collaborate with the model while keeping everything private and offline, and without having the model "forget" things?
Apologies if this is a really basic question that I have turned into a wall of text. But any insight you guys might have, or structured resources you can point me towards would be brilliant.

**Bot:** Hi, I'm Cricut Chatbot. 👋 How can I help you?

**Bot:** Please select a topic, or feel free to ask me a question

**Me:** Hi hello, I just tried to load a project and my Cricut software showed a message "Project Open Unsuccessful: TypeError: Cannot read properties of undefined (reading 'layerData')". Can you help?

**Bot:** Please clarify, here's what I found 👇 (Two options, I select "Javascript error messages")

**Bot:** Are you talking to me on the same device you wish to troubleshoot with?

**Me:** Yes

**Bot:** Which Cricut machine are you using?

**Me:** (I select "Cricut Maker")

**Bot:** JavaScript error messages are very diverse, but they are often fixed the same way. 🙂

**Bot:** Installing Design Space over your current version is the most normal fix.

**Bot:** (Link: Download Design Space)

**Bot:** Replace the existing file rather than making a copy of Design Space. 🗃

**Me:** (Selects "It works!" from the did-it-work options)

**Bot:** Great — happy to help!

---

Absolutely amazing.

github.com

Hey all, brand new to this community, excited to be here! I've stumbled my way through SD and I currently also have text-generation-webui up and running, and now SillyTavern. Having lots of fun with all of this stuff, learning how it works together, and how it all works! I've made a few models elsewhere, but TTS models for some reason I'm having issues wrapping my head around. I have a voice I want to make a model for, and I have some videos currently. I'm very familiar with editing audio and video, but stripping out their voice second by second sounds exhausting tbh. I was wondering if anyone had any good guides on their process of making a TTS model? Are there steps that can be automated while still producing decent results? How much time do I need of a person speaking? Should I run any specific tools to clean up audio? I'm completely new so any and all advice would be great. I want to run it locally and "plug it in" to my cluster already, so also I'll need the model to work with a tool that will work with the above programs (and I'll take advice there too if you have it!) Thanks!

arstechnica.com

Trove of combos is >45 times larger than number unearthed in entire history of science.

Hi all, As per the title, I'd love to give this a crack. Also, I'm doing so on my laptop, which has an Intel card in it, if that makes any difference? Any advice would be greatly appreciated.

github.com

*Permanently Deleted*

Wanted to share this one because I find myself using it every day, often in place of Google. You can even somewhat replace Siri with it using the app’s Apple Shortcuts.

Note: I haven’t read the privacy policy or terms, and I do suspect that there is some form of data collection.

ollama.ai

If you’re just wanting to run LLMs quickly on your computer in the command line, this is about as simple as it gets. Ollama provides an easy CLI to generate text, and there’s also a [Raycast extension](https://www.raycast.com/massimiliano_pasquini/raycast-ollama) for more powerful usage.
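Besides the CLI, a running Ollama instance can also be called from a script. Here is a minimal sketch, assuming Ollama is serving its local HTTP API on the default port 11434 and that a model has already been pulled. The model name `llama2` and the helper names `build_request` and `generate` are placeholders chosen for illustration, not anything from the post.

```python
import json
import urllib.request

# Assumed default address of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama2"):
    """Build a POST request asking Ollama for a single, non-streamed reply."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="llama2", timeout=120):
    """Send the prompt to the local server and return the generated text."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]


if __name__ == "__main__":
    # Requires the Ollama server to be running and the model to be available.
    print(generate("Why is the sky blue?"))
```

Setting `"stream": False` keeps the sketch short by asking for one JSON object instead of a stream of partial responses. For interactive use, streaming would probably feel more responsive.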

Found this online tool for trying out a bunch of different text2image models for free. No signup needed. Resolution is fixed at 512x512, but otherwise it’s pretty versatile for a free tool.

I'm not sure if it's intentional or not, but the Actually Useful AI community rarely gets any posts about AI that's actually useful. Is this just a reflection of the popularity of this community, or a reflection of the AI field in general?

pytorch.org

I was aiming to use LLMs with robotics in an upcoming project, and first needed to get versed in the current must-know techniques in the space. To that end I read a ton of papers and wrote this article to try to suss out the best parts of the current state of the art. I hope this helps people; I'd be thrilled to discuss much of this as well!

I've been really impressed with how well Perplexity answers my search queries. It seems to understand what I'm asking for and returns very relevant results. I would have expected more competition in the AI search engine space by now. The big players like YouChat, Bing, and others don't seem to be trying to match Perplexity's capabilities. I'm surprised there aren't more free public AI search options that are more accurate than Perplexity. Are there any good alternatives out there that I'm missing? I'd love to find other AI search engines that can match or exceed Perplexity.

cross-posted from: https://lemmy.intai.tech/post/215134

> Blog Post: https://cloudblogs.microsoft.com/opensource/2023/08/01/introducing-onnx-script-authoring-onnx-with-the-ease-of-python/