genesis

7 months ago

•

100%

It seems to me that AI won't completely replace jobs yet (though it probably will in 10-20 years). It will, however, reduce demand for workers because of oversaturation plus the ultraproductivity that AI brings. Moreover, AI will continue to improve. The work of a team of 30 people will be done by just 3.

Why are the microblogs not working properly? [\#kbinMeta](https://kbin.social/tag/kbinMeta)

genesis

11 months ago

•

100%

I'd rather avoid anything related to Red Hat. On the other hand, Debian 12 looks epic.

genesis

12 months ago

•

100%

I'll accept it if it's FOSS

Everything is Linux

github.com

Hello 👋, Just came across this project, and saw the app being advertised as open source, like on this page where it's advertised as "100% open source". Looking at the app repo though, the licensing...

www.scientificamerican.com

AI is poised to revolutionize our understanding of animal communication

Training AI models like GPT-3 on "A is B" statements fails to let them deduce "B is A" without further training, exhibiting a flaw in generalization. ([https://arxiv.org/pdf/2309.12288v1.pdf](https://arxiv.org/pdf/2309.12288v1.pdf))

**Ongoing Scaling Trends**

* 10 years of remarkable increases in model scale and performance.
* Expects next few years will make today's AI "pale in comparison."
* Follows known patterns, not theoretical limits.

**No Foreseeable Limits**

* Skeptical of claims certain tasks are beyond large language models.
* Fine-tuning and training adjustments can unlock new capabilities.
* At least 3-4 more years of exponential growth expected.

**Long-Term Uncertainty**

* Can't precisely predict post-4-year trajectory.
* But no evidence yet of diminishing returns limiting progress.
* Rapid innovation makes it hard to forecast.

TL;DR: Anthropic's CEO sees no impediments to AI systems continuing to rapidly scale up for at least the next several years, predicting ongoing exponential advances.

Paper: [https://arxiv.org/abs/2309.07124](https://arxiv.org/abs/2309.07124)

Abstract:

> Large language models (LLMs) often demonstrate inconsistencies with human preferences. Previous research gathered human preference data and then aligned the pre-trained models using reinforcement learning or instruction tuning, the so-called finetuning step. In contrast, aligning frozen LLMs without any extra data is more appealing. This work explores the potential of the latter setting. We discover that by integrating self-evaluation and rewind mechanisms, unaligned LLMs can directly produce responses consistent with human preferences via self-boosting. We introduce a novel inference method, Rewindable Auto-regressive INference (RAIN), that allows pre-trained LLMs to evaluate their own generation and use the evaluation results to guide backward rewind and forward generation for AI safety. Notably, RAIN operates without the need of extra data for model alignment and abstains from any training, gradient computation, or parameter updates; during the self-evaluation phase, the model receives guidance on which human preference to align with through a fixed-template prompt, eliminating the need to modify the initial prompt. Experimental results evaluated by GPT-4 and humans demonstrate the effectiveness of RAIN: on the HH dataset, RAIN improves the harmlessness rate of LLaMA 30B over vanilla inference from 82% to 97%, while maintaining the helpfulness rate. Under the leading adversarial attack llm-attacks on Vicuna 33B, RAIN establishes a new defense baseline by reducing the attack success rate from 94% to 19%.

Source: [https://old.reddit.com/r/singularity/comments/16qdm0s/rain\_your\_language\_models\_can\_align\_themselves/](https://old.reddit.com/r/singularity/comments/16qdm0s/rain_your_language_models_can_align_themselves/)

[https://arxiv.org/abs/2309.11495](https://arxiv.org/abs/2309.11495)

**Abstract**

Generation of plausible yet incorrect factual information, termed hallucination, is an unsolved issue in large language models. We study the ability of language models to deliberate on the responses they give in order to correct their mistakes. We develop the Chain-of-Verification (CoVe) method whereby the model first (i) drafts an initial response; then (ii) plans verification questions to fact-check its draft; (iii) answers those questions independently so the answers are not biased by other responses; and (iv) generates its final verified response. In experiments, we show CoVe decreases hallucinations across a variety of tasks, from list-based questions from Wikidata, closed book MultiSpanQA and longform text

[https://i.imgur.com/TDXcdMI.jpeg](https://i.imgur.com/TDXcdMI.jpeg)

[https://i.imgur.com/XfRVxJT.jpeg](https://i.imgur.com/XfRVxJT.jpeg)

**Conclusion**

We introduced Chain-of-Verification (CoVe), an approach to reduce hallucinations in a large language model by deliberating on its own responses and self-correcting them. In particular, we showed that models are able to answer verification questions with higher accuracy than when answering the original query by breaking down the verification into a set of simpler questions. Secondly, when answering the set of verification questions, we showed that controlling the attention of the model so that it cannot attend to its previous answers (factored CoVe) helps alleviate copying the same hallucinations. Overall, our method provides substantial performance gains over the original language model response just by asking the same model to deliberate on (verify) its answer. An obvious extension to our work is to equip CoVe with tool-use, e.g., to use retrieval augmentation in the verification execution step which would likely bring further gains.

Source: [https://old.reddit.com/r/singularity/comments/16qcdsz/research\_paper\_meta\_chainofverification\_reduces/](https://old.reddit.com/r/singularity/comments/16qcdsz/research_paper_meta_chainofverification_reduces/)
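
For anyone who wants to see the four CoVe steps from the abstract as code, here is a rough sketch. It is not the paper's implementation: the `Model` interface (a plain function from prompt text to response text), the function names, and all of the prompt wording are assumptions made up for illustration. The only part taken from the abstract is the order of the steps and the idea that each verification question is answered in its own prompt, without the draft, so the answers are not biased by it.

```python
from typing import Callable, List, Tuple

# Hypothetical interface: any function that maps a prompt to a text response.
Model = Callable[[str], str]


def plan_questions(query: str, draft: str, model: Model) -> List[str]:
    """Step (ii): ask the model for fact-checking questions, one per line."""
    prompt = (
        "List one verification question per line that fact-checks the draft.\n"
        f"Query: {query}\nDraft: {draft}"
    )
    return [line.strip() for line in model(prompt).splitlines() if line.strip()]


def answer_independently(questions: List[str], model: Model) -> List[Tuple[str, str]]:
    """Step (iii): each question goes in its own prompt, with no draft attached."""
    return [(q, model(q)) for q in questions]


def chain_of_verification(query: str, model: Model) -> str:
    draft = model(query)                               # step (i): initial draft
    questions = plan_questions(query, draft, model)    # step (ii): plan checks
    checks = answer_independently(questions, model)    # step (iii): run checks
    evidence = "\n".join(f"Q: {q}\nA: {a}" for q, a in checks)
    final_prompt = (                                   # step (iv): revise draft
        "Revise the draft so it agrees with the verification answers.\n"
        f"Query: {query}\nDraft: {draft}\n{evidence}"
    )
    return model(final_prompt)
```

To try it, pass any callable as `model`, for example a thin wrapper around whatever LLM client you already use. The sketch makes one model call for the draft, one for planning, one per verification question, and one for the final answer.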

medicalxpress.com

When the spinal cords of mice and humans are partially damaged, the initial paralysis is followed by the extensive, spontaneous recovery of motor function. However, after a complete spinal cord injury, this natural repair of the spinal cord doesn't occur and there is no recovery. Meaningful recovery after severe injuries requires strategies that promote the regeneration of nerve fibers, but the requisite conditions for these strategies to successfully restore motor function have remained elusive.

www.businessinsider.com

How Daron Acemoglu, one of the world's most respected experts on the economic effects of technology, learned to start worrying and fear AI.

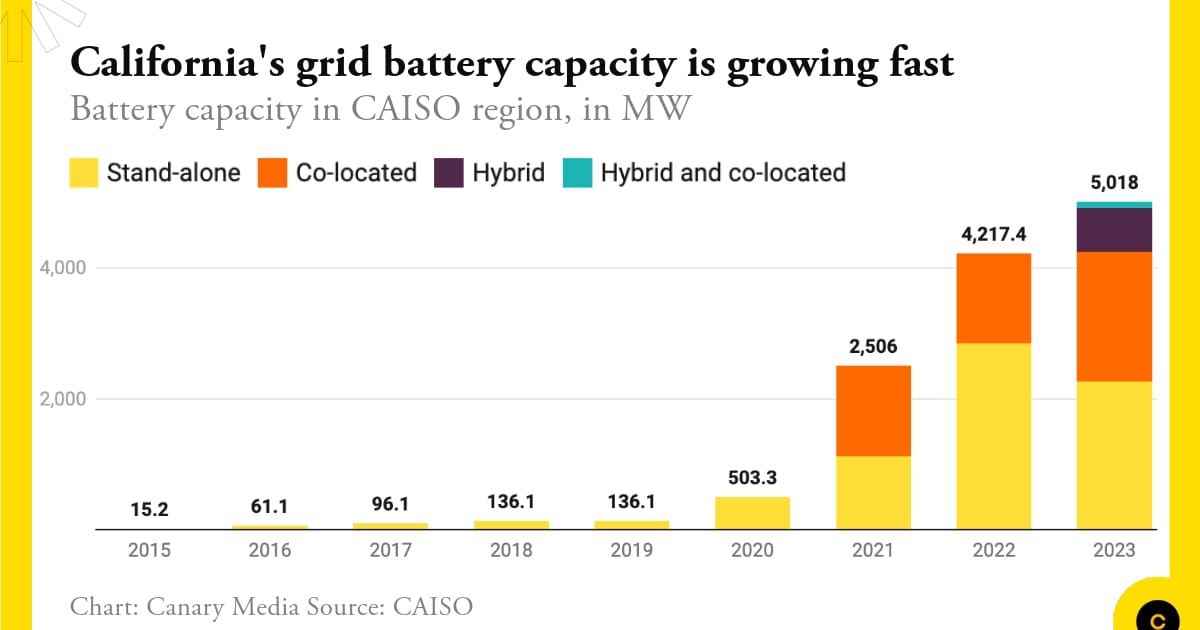

www.canarymedia.com

In 2020, California had 500 megawatts of grid battery storage. Now, just three years later, it has over 5,000 megawatts.

themessenger.com

The mayor says they are paying less per hour than the city's minimum wage to lease the robot

www.businessinsider.com

Will everything you learned in college be replaced by ChatGPT? The CEO of job site Indeed says it's not out of the question.

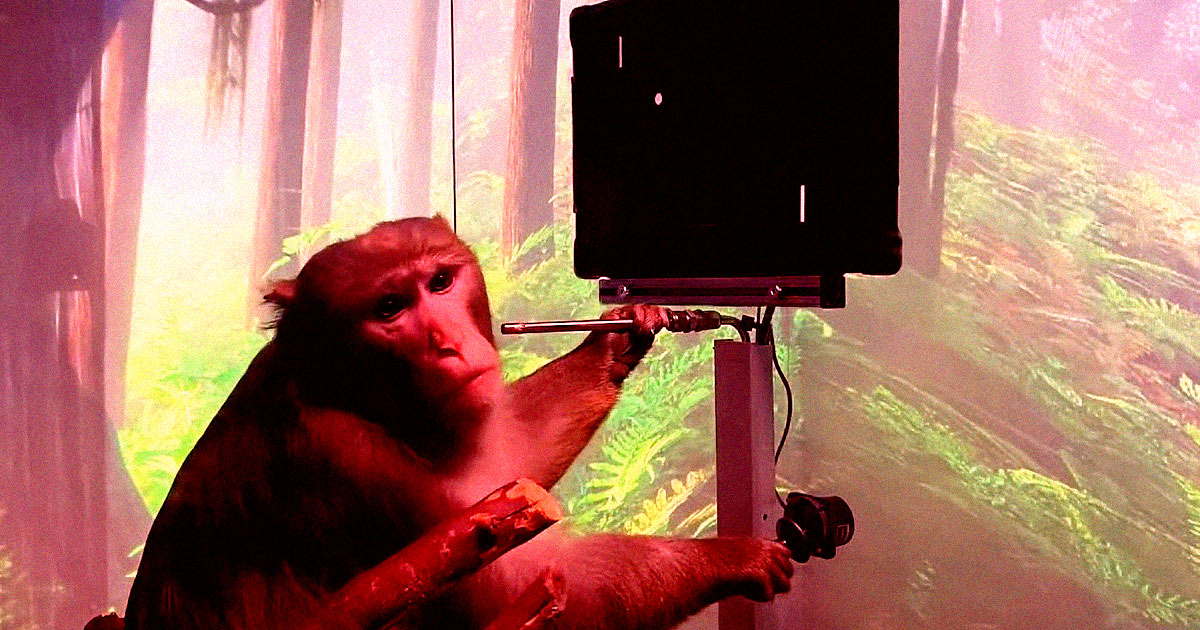

futurism.com

An investigation by Wired reveals the grisly complications of Neuralink brain implants in monkeys, including brain swelling and paralysis.

genesis

12 months ago

•

100%

The Lemmy/kbin instances' stability during June 12-14 is goofily accurate 💀

genesis

1 year ago

•

100%

www.pcworld.com

The latest updates to Google’s generative AI chat bot let it dig through your personal email, documents, and more—so you can get things done faster.

www.cnbc.com

Many American CEOs say they're worried about their workplace's lack of AI skills, a new survey of C-suite executives and workers found. Here's why.

www.newsweek.com

The pursuit of the most advanced AI—human-like artificial general intelligence—has prompted concerns among experts about potential dangers if it runs amok.

www.vox.com

63 percent of Americans want regulation to prevent artificial general intelligence, or AGI, which OpenAI aims to build.

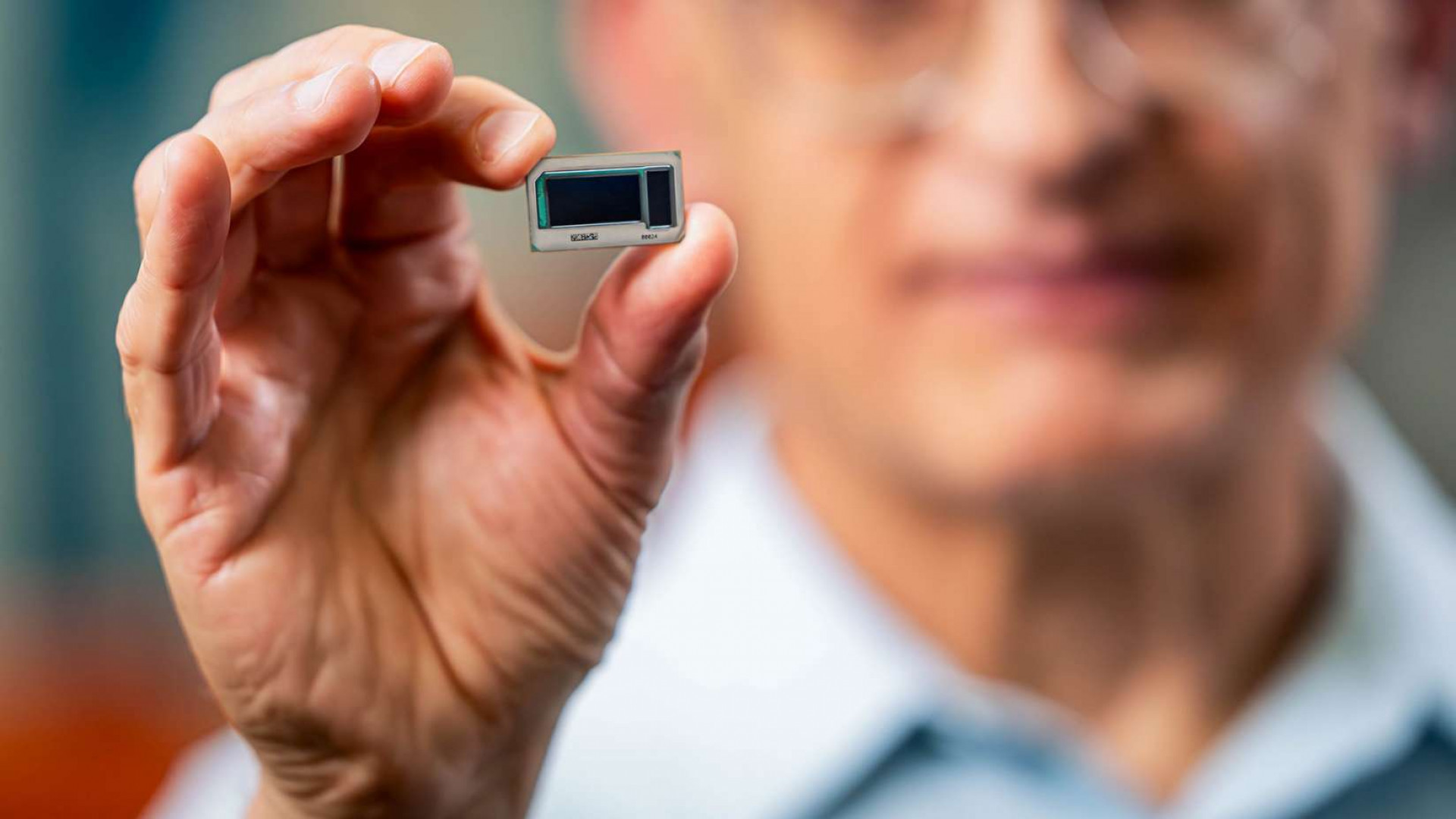

interestingengineering.com

Intel said it has made a significant breakthrough in the development of glass substrates for next-generation advanced packaging.

neuralink.com

Neuralink's Blog.

www.independent.co.uk

The latest generation of police surveillance tools are overused, underregulated and often completely wrong, opponents tell Josh Marcus and Alex Woodward

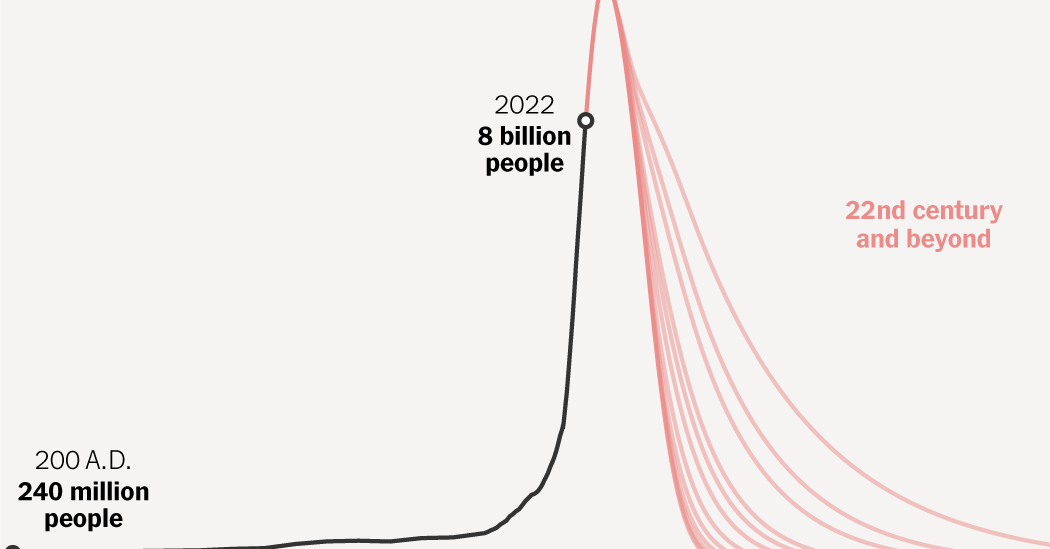

www.nytimes.com

Most people now live in countries where two or fewer children are born for every two adults.

www.cnbc.com

A first of its kind factory that will build humanoid robots is set to open in Salem, Oregon

genesis

1 year ago

•

100%

hi

genesis

1 year ago

•

100%

gimme chocolate!!

genesis

1 year ago

•

100%

agreed. I'm also rlly skeptical about LK-99.

genesis

1 year ago

•

100%

Space Colonization lore

genesis

1 year ago

•

100%

RIP Aaron. You were the hero we needed but lost. Fuck those prosecutors. I hope your dream comes true one day.

genesis

1 year ago

•

0%

Agreed. But it seems likely that the blackout will soon be extended, since a lot of people on YouTube advocated for it.